A Citrix Active/Active Deployment Part 2

Since Part 1 was published, we have learned a bit more about the overall design of the environment. Let’s recap the initial requirements.

- Management has instructed the team to build a highly available and stable environment that spans multiple datacenters.

- Each technology component must be separate in each datacenter, which means no sharing of Citrix resources for the infrastructure roles (Broker, StoreFront, etc.).

- We need to be able to test changes in one datacenter without affecting the other datacenter.

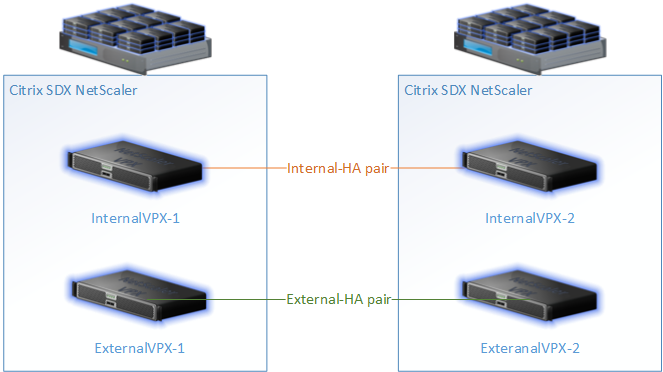

NetScalers: We will have two NetScaler SDX appliances at each datacenter. Each SDX will host one VPX, which forms an HA pair with a VPX on the second SDX; this VPX pair houses the NetScaler Gateway, GSLB configuration, load balancers, etc. This is similar to the screenshot below.

Storage and Hosts: There will be hosts and storage at each datacenter. A combination of ESXi and XenServer will be used as the hypervisor. ESXi will house the infrastructure roles (StoreFront, Delivery Controller, PVS servers, etc.), and XenServer will house the session VMs (“Friends don’t let friends get vTax’ed”). We will have a fiber-connected Dell Compellent at each datacenter, which will provide the storage for our VMs, PVS stores, etc. User files will reside in the first datacenter and will be replicated to the second datacenter as read-only. This means that no matter which datacenter a user logs into, they will access their redirected folders, My Documents, etc. in the first datacenter. Should the primary datacenter go down, the secondary would take over. Note: this is a limitation of our storage platform, which follows a master/slave (primary/secondary) replication model. This may change over time.

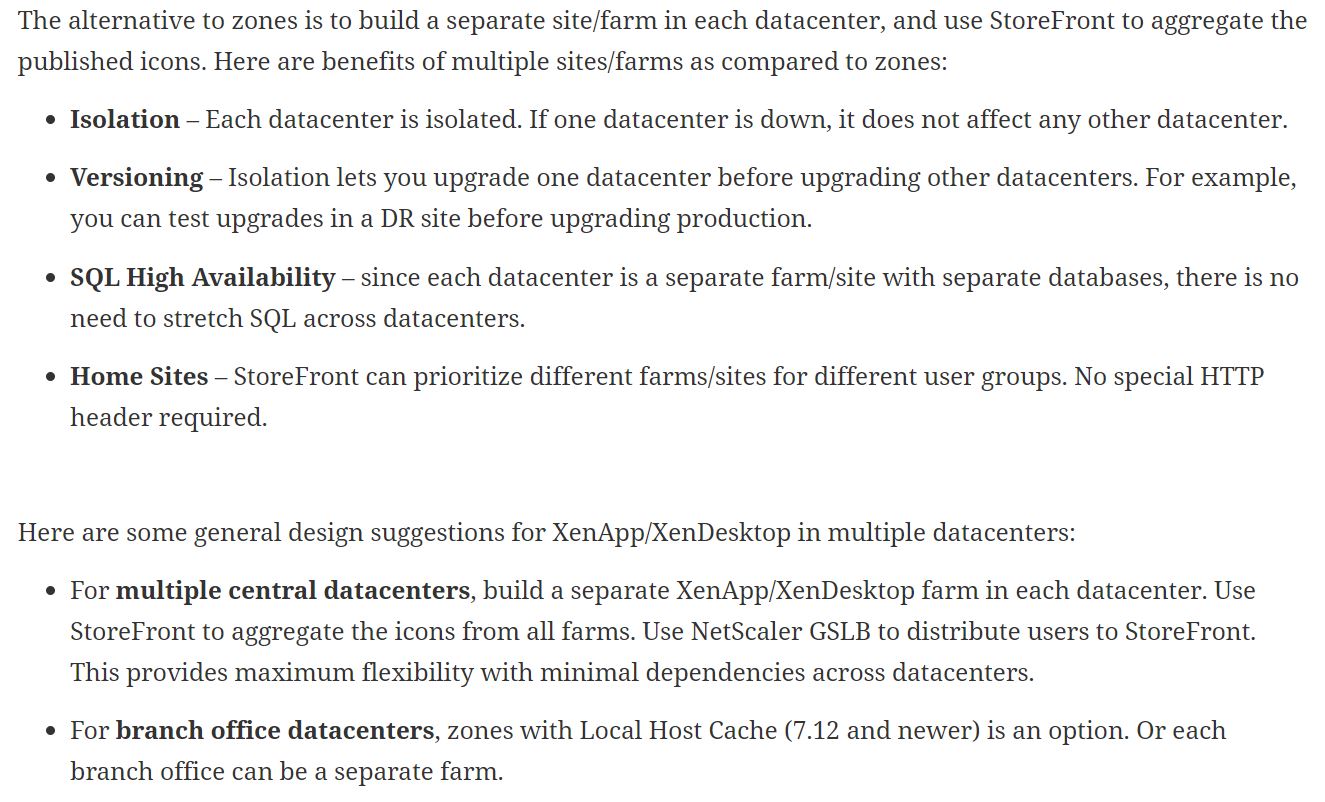

XA/XD Sites: We chose to deploy two separate sites, one in each datacenter. Based on Carl Stalhood’s article (screenshot below) and Chris Gilbert’s article, we could technically get away with deploying a single site; however, we chose to split it into two sites so that each datacenter is its own failure domain. This allows us to fully test site upgrades/updates, PVS image updates, etc. without affecting both datacenters.

Provisioning Services: Each datacenter will have two PVS servers for HA. Each pair will use its own SMB share, local to that datacenter, for its vDisk store. We will most likely use a PowerShell script to copy vDisks between the datacenters.
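The copy script itself isn’t shown here, so as a rough illustration of the idea (in Python rather than PowerShell), here is a minimal sketch of a one-way copy from one datacenter’s store share to the other’s. Everything in it is an assumption: the function names, the set of file extensions treated as vDisk files (base .vhd/.vhdx, version deltas .avhd/.avhdx, and the .pvp properties file), skipping .lok lock files, and the “missing, or size/modified time differs” rule for deciding what to copy.

```python
"""Hypothetical one-way copy of vDisk files between two PVS store shares.

Assumptions (not from the post): function names, which file extensions make
up a vDisk, and the "missing, or size/mtime differs" rule for copying.
"""
import shutil
from pathlib import Path
from typing import List

# Assumed vDisk file types: base disk, version (delta) disks, properties file.
# Lock files (.lok) are deliberately not in this set, so they are never copied.
VDISK_EXTENSIONS = {".vhd", ".vhdx", ".avhd", ".avhdx", ".pvp"}


def needs_copy(src: Path, dst: Path) -> bool:
    """True if dst is missing or its size/modified time differs from src."""
    if not dst.exists():
        return True
    s, d = src.stat(), dst.stat()
    # shutil.copy2 preserves the modified time, so an unchanged copy matches.
    return s.st_size != d.st_size or int(s.st_mtime) != int(d.st_mtime)


def sync_vdisks(source_store: Path, dest_store: Path) -> List[str]:
    """Copy new or changed vDisk files from source_store into dest_store.

    Only the top level of source_store is scanned. Returns the names of the
    files that were copied, in sorted order.
    """
    dest_store.mkdir(parents=True, exist_ok=True)
    copied: List[str] = []
    for src in sorted(source_store.iterdir()):
        if not src.is_file() or src.suffix.lower() not in VDISK_EXTENSIONS:
            continue
        dst = dest_store / src.name
        if needs_copy(src, dst):
            shutil.copy2(src, dst)  # copies contents plus timestamps
            copied.append(src.name)
    return copied
```

Usage would look something like `sync_vdisks(Path(r"\\dc1-pvs\store"), Path(r"\\dc2-pvs\store"))`, where both share paths are hypothetical. Copying the files is only half the job: if each datacenter runs its own PVS farm, as the separate-site design suggests, the copied vDisk would still need to be imported there, and a real script would want to avoid copying a vDisk while a new version is being created or merged.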

Now that we have a better picture of the design and the configuration, and the hosts and storage are set up, we’ll begin building out the VMs in the second datacenter. This will look nearly identical to the first datacenter:

- 2x Delivery Controllers

- 2x SQL Servers (Always On)

- 2x PVS Servers

- 2x StoreFront Servers

- 2x Director Servers

- 1x Licensing Server

Once I have these VMs built and installed, along with the NetScaler SDX/VPX, I’ll dive deeper into configuring the multi-site components: Delivery Controllers, StoreFront, and NetScaler.

In the meantime, we have some fine-tuning and hardening to do around syncing site settings, StoreFront subscriptions/stores, and PVS images. We also need to come up with a game plan for a multi-site Ivanti/AppSense setup.

Stay tuned for Part 3.

________________________


Hi,

Interesting reading, because we are doing a similar HA design right now with two completely isolated XA 7.15 sites, including NetScaler, etc. The biggest hurdle we see is the replication of user data and profiles. Do you already have any thoughts on that?

BR,

Michael

Thanks, Michael. User data and profiles are something I’ll get into more once we have a more concrete solution, but here is the most likely plan.

User data: we have Dell Compellent storage at each datacenter. One NAS/Compellent device will act as primary, and the second will act as secondary and receive replicated data from the primary. This means that a user in Datacenter #2 would go across the link to Datacenter #1 for their user files. If Datacenter #1 went down completely, we would flip primary and secondary.

User profiles: we use AppSense. Each side will have its own profile database, and the two databases will be synchronized at night by an AppSense script that runs on a schedule. This opens the door to possible profile loss if User1 logs into Datacenter #1, logs out, and then logs back into Datacenter #2 on the same day, before the nightly sync has run.

We will be fine-tuning both of these processes. Hope this helps a bit; stay tuned for more information, and please let me know what else you’d like to hear about.
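To make the same-day risk concrete, here is a toy model in Python. It is deliberately not AppSense’s actual database sync logic, which isn’t described here: it assumes each datacenter keeps its own copy of a user’s whole profile and that the nightly job simply keeps whichever copy was written last. The class and function names and the hour-based timestamps are hypothetical.

```python
"""Toy model: two per-datacenter profile stores plus a nightly sync.

Hypothetical throughout. This is NOT how AppSense/Ivanti replicates its
databases; it assumes whole-profile, last-writer-wins replication purely
to illustrate the same-day, cross-datacenter risk.
"""
from dataclasses import dataclass, field
from typing import Dict, Tuple

# A stored profile: (hour it was last written, settings dict).
Profile = Tuple[int, Dict[str, str]]


@dataclass
class ProfileStore:
    name: str
    profiles: Dict[str, Profile] = field(default_factory=dict)

    def login(self, user: str) -> Dict[str, str]:
        """Load a copy of whatever this datacenter currently holds for the user."""
        _, settings = self.profiles.get(user, (0, {}))
        return dict(settings)

    def logout(self, user: str, settings: Dict[str, str], hour: int) -> None:
        """Write the user's whole profile back to this datacenter only."""
        self.profiles[user] = (hour, dict(settings))


def nightly_sync(a: ProfileStore, b: ProfileStore) -> None:
    """Give both stores the most recently written whole profile per user."""
    missing: Profile = (-1, {})
    for user in set(a.profiles) | set(b.profiles):
        hour, settings = max(a.profiles.get(user, missing),
                             b.profiles.get(user, missing),
                             key=lambda p: p[0])
        a.profiles[user] = (hour, dict(settings))
        b.profiles[user] = (hour, dict(settings))


def run_same_day_scenario() -> Dict[str, str]:
    """User1 works in DC1 in the morning, then in DC2 that afternoon."""
    dc1, dc2 = ProfileStore("DC1"), ProfileStore("DC2")
    dc1.logout("user1", {"wallpaper": "red", "signature": "old"}, hour=0)
    nightly_sync(dc1, dc2)  # both datacenters start the day identical

    morning = dc1.login("user1")
    morning["wallpaper"] = "blue"           # change made in DC1
    dc1.logout("user1", morning, hour=9)

    afternoon = dc2.login("user1")          # DC2 still has wallpaper "red"
    afternoon["signature"] = "new"          # change made in DC2
    dc2.logout("user1", afternoon, hour=13)

    nightly_sync(dc1, dc2)                  # DC2's 13:00 copy wins everywhere
    return dc1.login("user1")


if __name__ == "__main__":
    print(run_same_day_scenario())  # {'wallpaper': 'red', 'signature': 'new'}
```

Under that assumption, the morning change made in Datacenter #1 is silently overwritten by the afternoon session in Datacenter #2, because the second session started from yesterday’s copy. A sync that merged individual settings rather than whole profiles would narrow that window, but whether that is possible depends on how the AppSense sync actually behaves.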